Reaping the benefits of AI while keeping healthcare risks to a minimum

Why safety should be front and centre in the roll-out of ambient voice technology

In most industries, the reassurance that AI will not take away jobs but will instead free workers from monotonous tasks, so that they can concentrate on higher-value work, sounds unconvincing.

After all, why would it make business sense to keep the same headcount and pay employees to polish their output when automation and a smaller workforce can deliver acceptable results?

Healthcare, however, presents a fundamentally different equation. In professions such as general practice, clinical care and emergency medicine, the issue is not surplus labour but chronic overwork.

GPs, clinicians and ambulance doctors, for example, typically work longer hours than they are contracted for.

Their working week is typically 42 to 48 hours long, and for some it consistently exceeds 50 hours, despite the 48-hour weekly average limit introduced by the EU Working Time Directive, which still applies in the UK after Brexit.

In this context, AI deployment brings relief, not redundancies. By reducing the administrative burden, it lets clinicians focus on their core responsibilities, easing the pressure of staff shortages while also helping to address burnout.

The lowest hanging fruit

A number of studies have recently tried to estimate how much time doctors spend on administration. One from 2022 found that healthcare professionals spend an average of 13.5 hours per week creating clinical documentation, a 25 per cent increase over the preceding seven years.

“Pyjama time”, a term coined to describe after-hours work spent updating electronic health records (EHRs), also suggests that the problem is deeply ingrained.

A more recent study focusing on clinical training paints an even grimmer picture, revealing that resident doctors spend up to four hours on administrative tasks for every hour of patient interaction.

This imbalance not only wastes highly specialised skills but also diminishes the quality of patient care. While writing up their notes, doctors keep their eyes on the screen rather than reassuring the patient through eye contact and undivided attention.

Efforts to address this burden are not new. Human scribes and automated dictation tools have long been used to support documentation workflows.

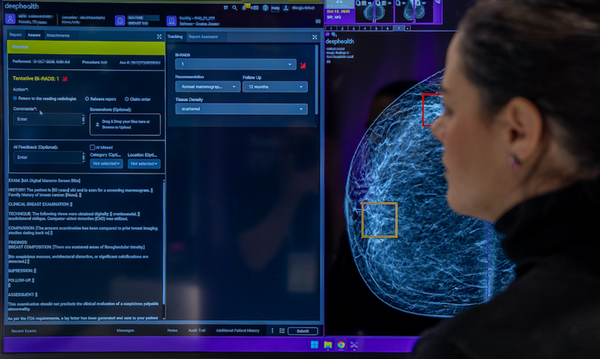

But the emergence of ambient voice technology (AVT), which is far better suited to the task than its predecessors, marks a significant step forward. AVT is a passive listening tool that can automatically capture patient-doctor conversations and convert them into structured medical documentation.

This capability relies on a combination of technologies. Speech recognition systems transcribe the audio, natural language processing interprets the context, and generative AI structures the conversation into coherent clinical notes. Interpreting and structuring language in this way is exactly what large language models (LLMs) excel at.
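To make the division of labour concrete, here is a toy Python sketch of how the three stages could be chained. It does not describe how any particular AVT product works: speech recognition is faked by passing in an already-transcribed conversation, hypothetical keyword rules (SECTION_KEYWORDS) stand in for natural language processing, and simple grouping of utterances into note sections stands in for the generative model. What it shows is the structure, in which each stage's output feeds the next.

```python
from dataclasses import dataclass, field


@dataclass
class Utterance:
    speaker: str  # "clinician" or "patient"
    text: str


@dataclass
class ClinicalNote:
    # Section name -> lines of the draft note, in the order sections first appear.
    sections: dict = field(default_factory=dict)


def fake_asr(audio):
    """Stage 1 stand-in for speech recognition.

    A real system would turn recorded audio into text. Here the "audio" is
    already a list of (speaker, text) pairs, so this only wraps them.
    """
    return [Utterance(speaker, text) for speaker, text in audio]


# Hypothetical keyword rules standing in for the NLP stage.
SECTION_KEYWORDS = {
    "plan": ("prescribe", "refer", "follow up"),
    "examination": ("blood pressure", "temperature", "examine"),
}


def tag_context(utterances):
    """Stage 2 stand-in for NLP: label each utterance with a note section."""
    tagged = []
    for u in utterances:
        lowered = u.text.lower()
        section = "history"  # default when no keyword matches
        for name, keywords in SECTION_KEYWORDS.items():
            if any(k in lowered for k in keywords):
                section = name
                break
        tagged.append((section, u))
    return tagged


def draft_note(tagged):
    """Stage 3 stand-in for generative AI: group tagged utterances into a note."""
    note = ClinicalNote()
    for section, u in tagged:
        note.sections.setdefault(section, []).append(f"{u.speaker}: {u.text}")
    return note


def ambient_pipeline(audio, asr=fake_asr, nlp=tag_context, generator=draft_note):
    """Chain the three stages; each one can be swapped for a real component."""
    return generator(nlp(asr(audio)))


consultation = [
    ("patient", "I've had a cough for two weeks."),
    ("clinician", "Your temperature is normal."),
    ("clinician", "I'll prescribe an inhaler and we'll follow up in a week."),
]
note = ambient_pipeline(consultation)
for section, lines in note.sections.items():
    print(section, lines)
```

Because each stage is passed in as a function, a real speech recognition model or an LLM could replace one of the stubs without changing the rest of the chain.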

A match made in heaven, as long as humans remain involved

As LLMs are part of the AVT architecture, the first risk that comes to mind is hallucinations. But a study published in September 2025 in Nature also identified omissions and contextual misinterpretations as common errors.

Encouragingly, AVT providers have made significant progress in mitigating these risks. By training models on domain-specific medical data and refining context awareness, they have reduced error rates to impressively low levels.

In a recent study analysing nearly 13,000 clinician-annotated sentences summarised by Tortus AI, the tool’s hallucination rate was 1.47 per cent, while its omission rate, the rate at which it left out details when summarising the text, was 3.45 per cent.
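Percentages can hide what these rates mean in practice. The short Python calculation below turns them back into approximate sentence counts and "one in N" figures. It assumes both rates are expressed per annotated sentence, which the summary above does not spell out, and it uses 13,000 as a round stand-in for "nearly 13,000", so the counts are rough upper bounds rather than figures from the study.

```python
# Rates reported for the Tortus AI evaluation described above.
HALLUCINATION_RATE = 0.0147  # 1.47 per cent
OMISSION_RATE = 0.0345       # 3.45 per cent

# Assumption: the study covered "nearly 13,000" sentences; 13,000 is used here
# as a round figure, so the counts below are approximate upper bounds.
SENTENCES = 13_000


def approx_count(rate, total=SENTENCES):
    """Turn a rate back into an approximate number of affected sentences."""
    return round(rate * total, 1)


def one_in(rate):
    """Express a rate as 'roughly one sentence in N'."""
    return round(1 / rate)


hallucinated = approx_count(HALLUCINATION_RATE)  # roughly 190 sentences
omitted = approx_count(OMISSION_RATE)            # roughly 450 sentences
print(f"Hallucinations: ~{hallucinated} sentences (about 1 in {one_in(HALLUCINATION_RATE)})")
print(f"Omissions: ~{omitted} sentences (about 1 in {one_in(OMISSION_RATE)})")
```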

While not negligible, these figures suggest that the technology is approaching a level of reliability suitable for real-world deployment.

Another category of risk concerns patient data. To address patients’ privacy concerns, the handling of their data must comply with GDPR: audio recordings must be deleted once their content has been incorporated into EHRs, and must not be reused to train unrelated AI models.
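The two rules in this paragraph, deleting the audio once it is in the record and not reusing it for other purposes, translate naturally into software checks. The Python sketch below is purely illustrative: the Encounter type, the "note_generation" purpose label and the dictionary standing in for an EHR are all hypothetical, and real GDPR compliance involves far more than a code-level check.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical purpose label: the only use the recording was collected for.
ALLOWED_PURPOSES = {"note_generation"}


@dataclass
class Encounter:
    encounter_id: str
    audio: Optional[bytes]  # raw recording, kept only until the note is filed


def release_audio(encounter: Encounter, purpose: str) -> bytes:
    """Hand out the recording only for its original purpose, and only while it exists."""
    if purpose not in ALLOWED_PURPOSES:
        raise PermissionError(f"audio may not be used for {purpose!r}")
    if encounter.audio is None:
        raise LookupError(f"audio for {encounter.encounter_id} has been deleted")
    return encounter.audio


def file_note_and_purge(encounter: Encounter, ehr: dict, note: str) -> None:
    """Write the note into a stand-in EHR, then delete the source audio."""
    ehr[encounter.encounter_id] = note
    encounter.audio = None


ehr = {}
enc = Encounter("enc-001", b"...raw audio...")
audio = release_audio(enc, "note_generation")  # permitted: the original purpose
file_note_and_purge(enc, ehr, "Cough for two weeks; temperature normal.")
# From here on, release_audio(enc, "model_training") raises PermissionError,
# and release_audio(enc, "note_generation") raises LookupError.
```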

The opacity of these AI systems also continues to rule out full automation. As a result, current best practice endorsed by the NHS keeps clinicians firmly in the loop.

The caveats of NHS endorsement

Under the form of implementation currently supported by the NHS, the onus is on doctors to review and validate AI-generated notes, identifying any missing points or fabricated symptoms before the notes are finalised.
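In software terms, this places a mandatory human sign-off between the AI draft and the medical record. The Python sketch below illustrates such a gate under simple assumptions: the DraftNote class and finalise function are hypothetical, fabricated sentences are flagged by their position in the draft, and omitted points are appended by the reviewer. Actual products and EHR integrations will handle review in their own ways.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class DraftNote:
    sentences: List[str]                                # AI-generated draft
    flagged: Set[int] = field(default_factory=set)      # indices marked as fabricated
    additions: List[str] = field(default_factory=list)  # points the AI left out
    approved_by: Optional[str] = None                   # reviewing clinician

    def flag_fabricated(self, index: int) -> None:
        self.flagged.add(index)

    def add_missing(self, sentence: str) -> None:
        self.additions.append(sentence)

    def approve(self, clinician: str) -> None:
        self.approved_by = clinician


def finalise(draft: DraftNote) -> List[str]:
    """Produce the final note, refusing to do so without clinician sign-off."""
    if draft.approved_by is None:
        raise ValueError("draft has not been reviewed and approved by a clinician")
    kept = [s for i, s in enumerate(draft.sentences) if i not in draft.flagged]
    return kept + draft.additions


draft = DraftNote(["Cough for two weeks.", "Reports chest pain.", "Temperature normal."])
draft.flag_fabricated(1)          # the patient never mentioned chest pain
draft.add_missing("Non-smoker.")  # discussed, but missing from the draft
draft.approve("Dr A. Example")    # hypothetical reviewer
print(finalise(draft))
# ['Cough for two weeks.', 'Temperature normal.', 'Non-smoker.']
```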

But while some of the cognitive load remains, the time savings can be considerable. A study led by Great Ormond Street Hospital’s Data Research, Innovation and Virtual Environments unit (GOSH DRIVE), conducted across nine NHS sites in London, offers promising evidence.

Evaluating more than 17,000 patient encounters across hospitals, GP practices, mental health services and ambulance teams, the study found a 23.5 per cent increase in direct patient interaction time during appointments supported by AVT.

Overall appointment length fell by 8.2 per cent when AI scribes were used, and A&E departments achieved a particularly strong 13.4 per cent increase in the number of patients seen per shift.

While these results are encouraging, they also underline the importance of careful implementation. For the best results, doctors need training in using these tools efficiently and in best practice for auditing AI-generated content.

The NHS has also taken steps to support adoption. It has published a document titled Guidance on the use of AI-enabled ambient scribing products in health and care settings, and has created a self-certified supplier registry for AVT, which currently lists 23 suppliers that have submitted documentation demonstrating compliance with the required criteria.

It must be noted, though, that inclusion on the registry does not constitute full clinical validation. NHS England has confirmed only that the necessary documentation has been provided, not that the technology has been independently assessed for safety or effectiveness.

As such, responsibility for successful deployment remains shared between technology providers and healthcare organisations.

For AVT to deliver on its promise, both sides must commit to rigorous standards. Developers must continue to improve model performance, accuracy and transparency, while healthcare providers must invest in assessing, localising and validating these products.

Both parties know that the stakes are high. Errors in clinical documentation or patient data breaches could have serious consequences and delay adoption across the sector for many years.

Conversely, a cautious, well-governed approach has the potential to unlock significant benefits.

For solution providers, it is a prerequisite for commercial success. For clinicians, it means a reduced administrative burden and more manageable working hours.

But it is patients who could gain the most. Engaging with their doctors in more focused, meaningful interactions could fundamentally change the medical experience and lead to better health outcomes.

Zita Goldman


© 2025, Lyonsdown Limited. Business Reporter® is a registered trademark of Lyonsdown Ltd. VAT registration number: 830519543